Gradient-Domain Metropolis Light Transport

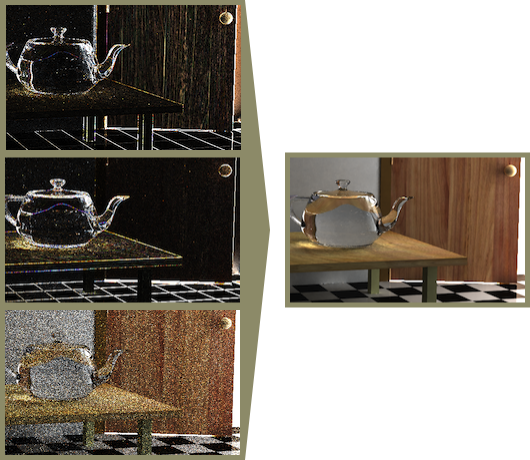

Left side, top to bottom: gradients of the image in the x and y directions, and the coarse estimate. Right side: the resulting reconstructed image. Originally rendered at a resolution of 1280x720 with 20 minutes of rendering time. (Images from: Lehtinen et al., Gradient-Domain Metropolis Light Transport)

Algorithms for physically based rendering aim to simulate the transport of light in a scene as accurately as possible in order to generate photorealistic images from 3D scene descriptions. Mathematically, this amounts to solving a complicated recursive integral, the so-called "rendering equation".
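For reference, the rendering equation is commonly written in the following form. The notation is the usual textbook one and is not taken from this page: $L_o$ is outgoing radiance, $L_e$ emitted radiance, $f_r$ the BRDF, $L_i$ incoming radiance, $n$ the surface normal and $\Omega$ the hemisphere above the surface point $x$.

```latex
L_o(x, \omega_o) = L_e(x, \omega_o)
  + \int_{\Omega} f_r(x, \omega_i, \omega_o)\, L_i(x, \omega_i)\, (\omega_i \cdot n)\, \mathrm{d}\omega_i
```

The recursion arises because the incoming radiance $L_i$ at one point is the outgoing radiance $L_o$ of whichever surface is visible in direction $\omega_i$.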

Monte Carlo techniques such as bidirectional path tracing approximate the solution statistically by summing the contributions of randomly selected light paths. However, they converge slowly: in the presence of complex lighting phenomena such as caustics, it can take a very long time before the "graininess" introduced by random sampling begins to disappear.
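The slow decay of sampling noise can be illustrated with a toy problem. The sketch below is not a renderer: it estimates the integral of x² over [0, 1] (exact value 1/3) from uniform random samples and measures how much the estimate scatters between runs. The function names `estimate` and `spread` and all sample counts are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)

def estimate(n_samples):
    """Monte Carlo estimate of the integral of x^2 over [0, 1]."""
    x = rng.random(n_samples)          # uniform samples in [0, 1)
    return np.mean(x ** 2)

def spread(n_samples, repeats=200):
    """Standard deviation of the estimate over many independent runs."""
    return np.std([estimate(n_samples) for _ in range(repeats)])

spread_100 = spread(100)
spread_1600 = spread(1600)

# The noise of a Monte Carlo estimate shrinks roughly with 1/sqrt(N),
# so 16 times more samples should give about a quarter of the spread.
print("spread with  100 samples:", spread_100)
print("spread with 1600 samples:", spread_1600)
```

Halving the noise therefore costs roughly four times as many samples, which is why rare but bright paths such as caustics stay grainy for so long.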

The Gradient-Domain Metropolis Light Transport algorithm mitigates this problem by rendering not the image itself but its gradient, that is, its derivatives in the x and y directions. Additionally, it concentrates path samples in regions that contribute strongly to those gradients. The image is then reconstructed from the gradient and a coarse approximation. The result has smooth surfaces in areas with a low rate of change and well-sampled detail in areas where the image changes rapidly, even when caustics or complex reflections are present in the scene.
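The reconstruction step can be sketched as a small least-squares problem: find the image whose finite differences match the rendered gradients while staying close to the coarse approximation. This is a minimal illustration on a tiny synthetic image, not necessarily the solver used by the authors. The function name `reconstruct`, the weight `alpha` and the test data are assumptions made for this example, and the dense matrix is only practical for very small images.

```python
import numpy as np

def reconstruct(dx, dy, coarse, alpha=0.2):
    """Least-squares image whose forward differences are close to (dx, dy)
    and whose pixel values are close to `coarse` (weighted by alpha).
    dx[y, x] stands for I[y, x+1] - I[y, x]; its last column is ignored.
    dy[y, x] stands for I[y+1, x] - I[y, x]; its last row is ignored."""
    h, w = coarse.shape
    idx = np.arange(h * w).reshape(h, w)   # pixel -> unknown index
    rows, rhs = [], []

    def add_row(coeffs, value):
        row = np.zeros(h * w)
        for pixel, c in coeffs:
            row[pixel] = c
        rows.append(row)
        rhs.append(value)

    for y in range(h):
        for x in range(w):
            if x + 1 < w:   # horizontal gradient constraint
                add_row([(idx[y, x + 1], 1.0), (idx[y, x], -1.0)], dx[y, x])
            if y + 1 < h:   # vertical gradient constraint
                add_row([(idx[y + 1, x], 1.0), (idx[y, x], -1.0)], dy[y, x])
            # weak constraint tying the pixel to the coarse image
            add_row([(idx[y, x], alpha)], alpha * coarse[y, x])

    solution, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return solution.reshape(h, w)

# Synthetic "ground truth": a vertical edge plus a gentle ramp.
truth = np.zeros((8, 8))
truth[:, 4:] = 1.0
truth += 0.05 * np.arange(8)[:, None]

# Exact gradients, but a noisy coarse image.
dx = np.zeros_like(truth)
dx[:, :-1] = truth[:, 1:] - truth[:, :-1]
dy = np.zeros_like(truth)
dy[:-1, :] = truth[1:, :] - truth[:-1, :]
rng = np.random.default_rng(0)
coarse = truth + rng.normal(0.0, 0.2, truth.shape)

image = reconstruct(dx, dy, coarse)
err_coarse = np.sqrt(np.mean((coarse - truth) ** 2))
err_image = np.sqrt(np.mean((image - truth) ** 2))
print("RMS error of coarse image:       ", err_coarse)
print("RMS error of reconstructed image:", err_image)
```

In this toy setup the gradients are exact, so the reconstruction mainly needs the coarse image to pin down the overall brightness, and most of its noise is suppressed. In a real render the gradients are noisy too, and the choice of `alpha` trades off trust in the gradients against trust in the coarse image.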